Last week I flippantly posted on the forums that I was thinking of shutting them down. People obviously didn’t like this news.

My reasons are probably obvious. I’m not sure small forums are still needed. I’m not sure whether something like Reddit makes them redundant for discussing a specific subject, and whether something like Discord makes them redundant for idle chatting.

Maybe not right now, but is that the way things are going? In 5 years, in 10 years?

Do I get anything from maintaining them? Does the company? Why are we paying to run and maintain a forum that brings us nothing but issues?

I get that there’s a community of people there who know each other and have been communicating this way for 15 years, and that’s a precious thing. But are you still going to be there in 5 years? 10 years?

Hand it over to someone who wants to manage it?

I don’t know if this is wise from an ethical or legal point of view. We have 15 years of forum activity – 32 million posts. Can you hand that over just willy-nilly? Do we need to pay a bunch of money to a lawyer to find out?

Plus what’s the benefit? Why do you need all of the old posts to relocate?

Downtime

So what a coincidence that, with all of that spinning around on the forums, they went down. I woke up on Saturday morning to a ton of Pingdom emails. I got to my computer to find the database wasn’t responding.

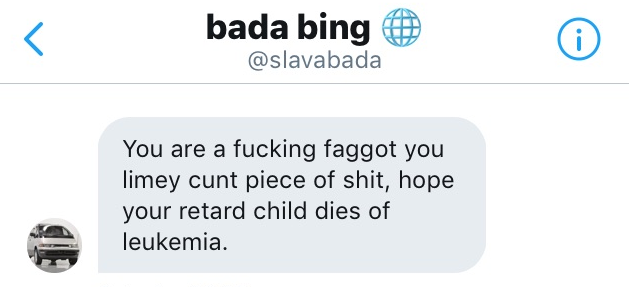

Discord was blowing up with everyone talking about how unfair it was to just turn the forums off with only a few days warning, how Garry is a selfish childish arsehole.

I checked Azure’s status page and they’d had an issue with the storage system. Relieved that it wasn’t something I’d done, I left it for a while, assuming it’d come back up on its own.

Support

It didn’t come back after a few hours so I put up a “Sorry” page explaining that the database was down – as a way to inform everyone that it hadn’t just been deleted. And I fired off a support request.

Support informed me that they were working with the team in the US. Saturday turned into Sunday and still nothing. Support let me know that the ticket was moderate severity so they were working business hours. I asked them if business hours meant “not weekends” but didn’t get a reply.

No Backups

Thinking through the worst case I came to realise I had no backups of the forum’s data. If it was fucked, it was fucked. The forums would close down for real because there wouldn’t be a way to bring them back. I’d purposely chosen to use an Azure MSSQL database because they backed themselves up – I didn’t need to do anything. But if some fuck-up fucked up the data – we were fucked.

So I opened a new ticket with the top severity level. They phoned me within 5 minutes with a LogMeIn link and watched me try some stuff, then there were more random phone calls during the day. At about 6pm they phoned and let me know that the problem should be fixed.

Later on they emailed me a post-mortem:

The database first went through a reconfiguration around 13th Oct 3:26 am UTC due to a known xstore outage in the EastUS region. This outage caused the storage on this database to become unavailable and consequently caused the compute to go down. As the compute was going through recovery, one of the replicas got stuck in its transition to primary state. Due to a bug in the failure path caused by xstore outage, the transition was stuck. Restart of the compute replica mitigated the issue.

The following updateSLO/Maxsize operations were stalled behind the unhealthy compute and completed once the compute became healthy.

The bug in the failure path has been fixed and will be deployed in production in next few weeks

Future

I’m still considering what to do with the forums. Whatever I decide won’t happen overnight. I’m not just going to switch them off. It’ll happen early next year.

If the forums exist they need to be agile and evolving. I can’t be the chokepoint there, so there needs to be a way to let the developers in the community improve it themselves.

I’m leaning towards making the front-end site a SPA with a websocket connection and accepting pull requests to it. We could CORS localhost so people can develop locally but with the live data, and any legit community alternatives could also be CORS’d.

That’d make the backend the only chokepoint – which is a tiny part of it.
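
To make the CORS idea a bit more concrete, here is a minimal sketch of the kind of origin check the backend could run. Nothing here is the actual forum code: the function names, the `ALLOWED_ORIGINS` set and the `forum-alt.example` origin are all made up for illustration. The assumption is that localhost is allowed on any port for local development, and that approved community front-ends are listed explicitly.

```python
from typing import Dict, Optional
from urllib.parse import urlsplit

# Hypothetical allowlist of approved community front-ends (illustrative only).
ALLOWED_ORIGINS = {"https://forum-alt.example"}

# Hosts treated as "local development" (an assumption, not a rule from the post).
LOCAL_HOSTS = {"localhost", "127.0.0.1"}


def is_allowed_origin(origin: str) -> bool:
    """Return True if a browser Origin header value may use the live API."""
    parts = urlsplit(origin)
    # An Origin is scheme://host[:port] only; reject anything else.
    if parts.scheme not in ("http", "https") or parts.hostname is None:
        return False
    if parts.path or parts.query or parts.fragment:
        return False
    # Any port on localhost, so contributors can run their own dev server.
    if parts.hostname in LOCAL_HOSTS:
        return True
    # Everything else must match an approved origin exactly.
    return origin in ALLOWED_ORIGINS


def cors_headers(origin: Optional[str]) -> Dict[str, str]:
    """Build the CORS response headers for a request, or none if not allowed."""
    if origin is None or not is_allowed_origin(origin):
        return {}
    # Echo the specific origin back and tell caches the response varies by it.
    return {"Access-Control-Allow-Origin": origin, "Vary": "Origin"}
```

For example, `cors_headers("http://localhost:3000")` returns the allow header, while `cors_headers("https://localhost.evil.example")` returns nothing because the hostname has to match exactly. One thing worth knowing: browsers don't apply CORS to websocket handshakes, so the websocket endpoint would probably need to run the same `is_allowed_origin` check on the handshake's `Origin` header itself.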
